
Feedback for Learning: An Assessment System Beyond Grades

Hi and welcome back!

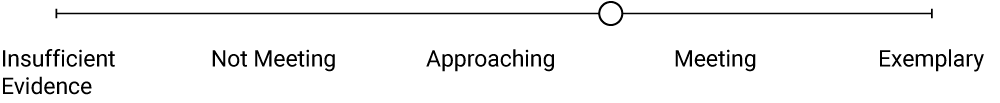

In this installment, I want to get to the meat of things: if assessment should be treated as feedback rather than measurement (see last blog), how do we do so without traditional “grades” in a mastery-based environment? The shift from measurement to qualitative feedback must be accompanied by ways to provide feedback in a growth-oriented manner, as well as ways to interpret and communicate “the level to which” a piece of evidence demonstrates the learning goals that have been articulated. That phrase (the level to which) is very important.

How does this compare to a “grade”?

This is especially true of transdisciplinary goals, as they are not “measurable” in a traditional way. If we’ve achieved some of the shifts outlined in earlier articles, we should have desired performance indicators (what we are looking for) and a way to help students explicitly demonstrate and provide evidence (what we are looking at) for these goals through creative assessment design. What we are missing (how we are communicating) is a means of providing consistent and appropriate feedback that indicates performance and progress across a range of possible “indicators of quality”.

Through the use of empirical reference points (what we are looking for), we should be communicating and conversing with students about the level to which their demonstration of a desired learning goal (what we are looking at) indicates the qualities of mastery, and about the road still to be traveled to develop in specific areas. In other words, we need to consistently provide qualitative feedback that is clear, actionable and valid.

Many schools become mired in an overly complicated set of grading tools and language that obscures performance and growth, adding so many layers that no one can connect learning and monitor growth consistently. There may end up being different rubrics for elements of critical thinking, for example, across different subject areas. Or there may be a series of task-specific rubrics that become one-offs, unconnected to other demonstrations of similar skills and dispositions. When this happens, an opportunity to align and simplify the assessment landscape has been lost.

This blog is intended to provide a basis for professional dialogue about assessment structures built around the important learning goals contained within your Mastery Credit Architecture. The Mastery Transcript should be a natural outflow of an aligned assessment and feedback framework, not something we populate by translating old metrics (filling new boxes with old things). The metrics detailed below produce empirical data, rather than finite grades, that remain relevant and consistent over time and across contexts. Through a system such as this, feedback data can be created and shared that will lead naturally to a valid Mastery Transcript.

Flattening the Assessment Feedback System

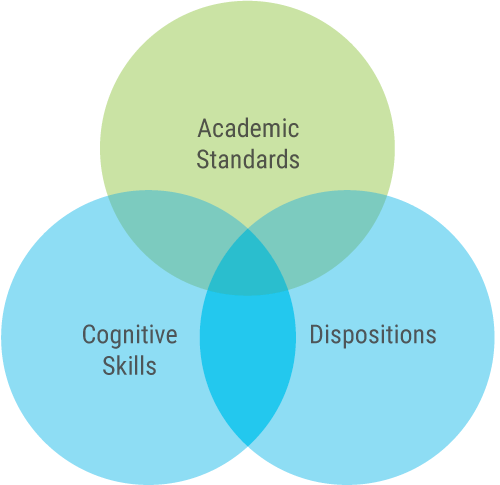

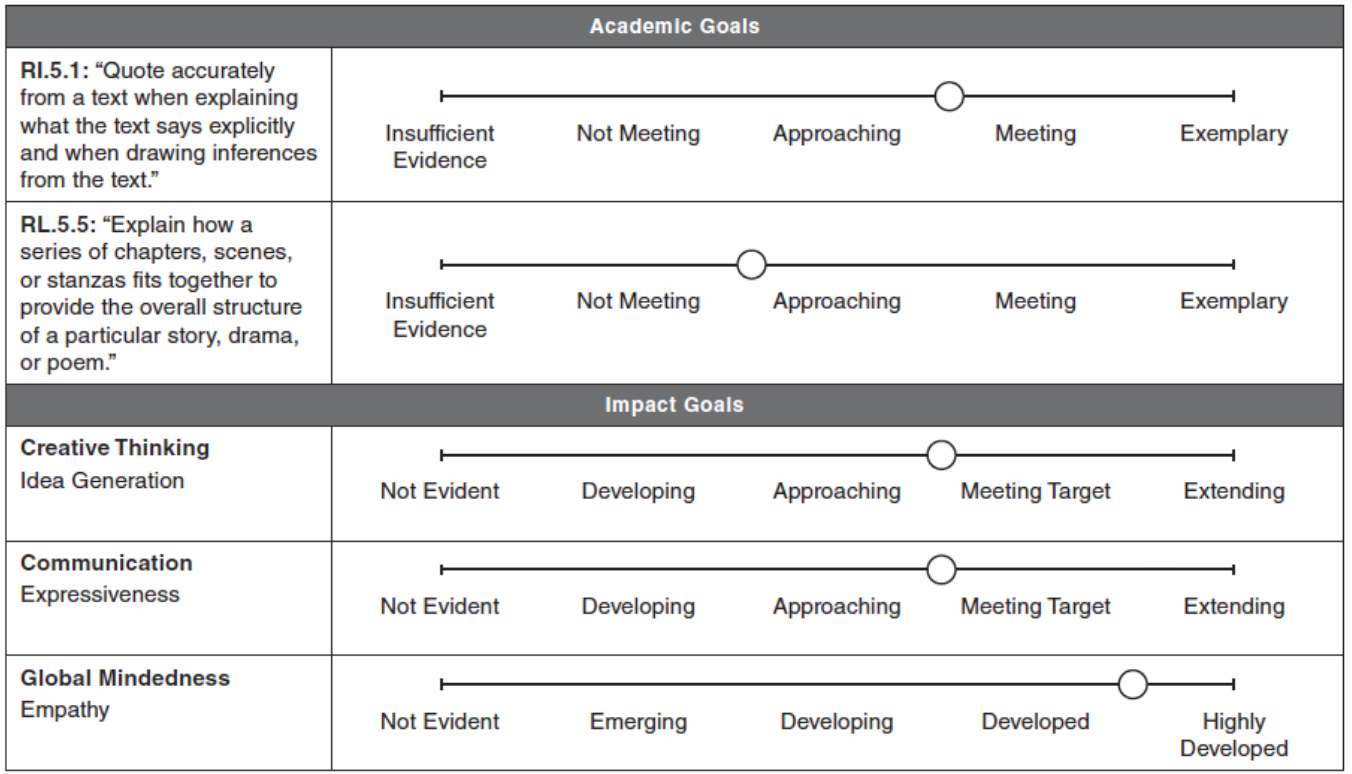

In borrowing from qualitative research methodology as we conceptualize our Mastery Transcript, we need to “code” our interpretation of qualitative evidence (what we are looking for in what we are looking at) for optimal understanding. Each demonstration of learning is unique, but there may be simple, common approaches we can take to coding for quality. Regardless of how your class progresses, you should “flatten” the environment for providing qualitative feedback so that students can connect their learning across different contexts and over time. Simplify and flatten feedback metrics into three different types of learning goals: academic standards, cognitive skills and dispositions.

Source: Greg Curtis, Moving Beyond Busy, Solution Tree (2019)

These three simple areas of feedback, with their associated quality scales (see below, where we further unpack “the level to which”), are universal for all subject areas and all grades. This streamlines the feedback landscape so that everyone in the learning community, especially students, can adopt a common language, and it provides the consistency necessary for learning to be connected across all contexts. Develop age-appropriate language for the scales below so that students can self-assess their evidence of learning using the same metrics. This adds another layer that strengthens overall feedback and creates valuable points of conversation about the interpretation of evidence among students, teachers and other sources of feedback. You will find that the simpler the feedback lexicon, the more you will be able to foster complex, layered learning through communication.

Furthermore, this framework recognizes that some feedback may, at times, focus on only one area, such as academic standards, cognitive skills or dispositions alone. This would be formative feedback, as any task that represents only one domain would likely be “practice”. However, rich assessment design and, therefore, rich demonstrations of learning reside in the areas of intersection between these three domains. These demonstrations represent “the game” (see the previous blog).

Feedback Scales for Academic Learning, Cognitive Skills and Dispositions

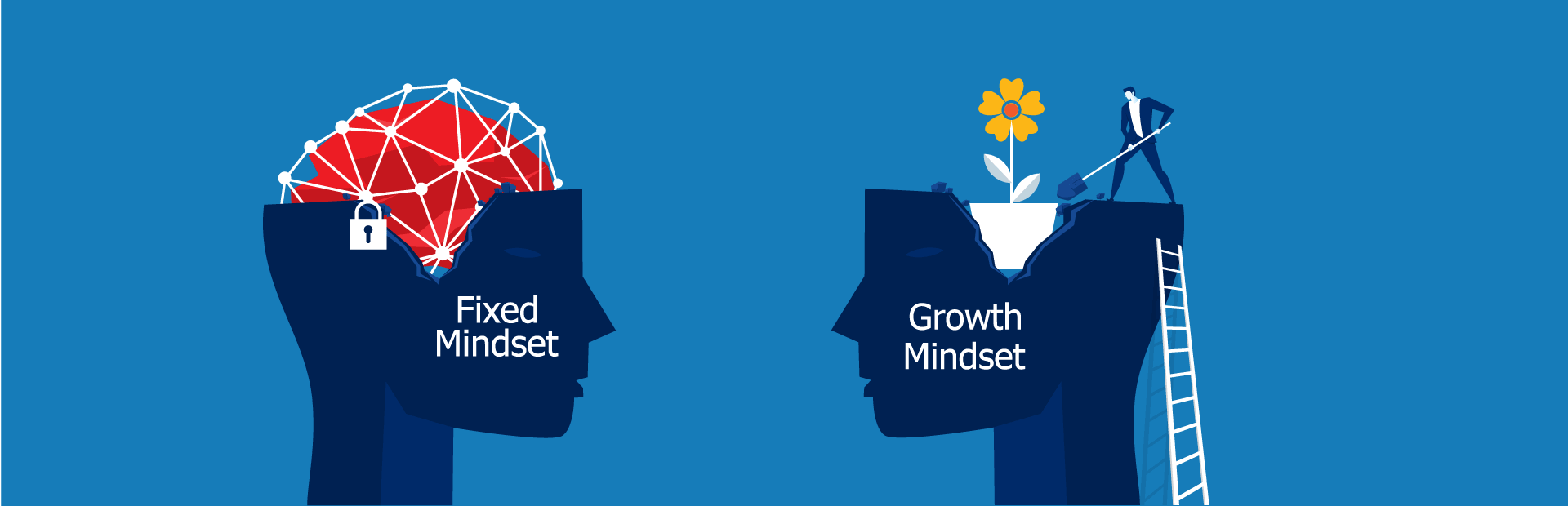

Cognitive skills require a level of proficiency with tools and strategies in order to be exercised at a high level within the context of achieving a challenging transfer task. Dispositions are different — we cannot realistically ask students to demonstrate proficiency in areas like resilience, empathy or growth mindset. “Proficiency” doesn’t really exist in the world of dispositions. Instead, our goal is development, where students move towards effectively embedding the disposition as part of their approach to learning and life.

Source: Greg Curtis, Moving Beyond Busy, Solution Tree (2019)

Schools should look at the transdisciplinary elements of their mastery domains as falling into either cognitive skills or dispositions. Each of these types of learning goals is distinct and should be addressed with fidelity to its own characteristics. Below are examples of each.

| Cognitive Skills | Dispositions |
|---|---|

Source: Greg Curtis, Moving Beyond Busy, Solution Tree (2019)

A proficiency scale is the best fit for cognitive skills, providing frequent feedback on a student’s progress toward demonstrating mastery of each specific skill.

| Not Evident | Developing | Approaching | Target | Extending |
|---|---|---|---|---|
| Elements of this skill have not been demonstrated. Note: This is not a "0"; "Not Evident" is simply a "null" and is not included in any consolidation of performance data. | Student applies this skill by following the provided or sample strategies and tools. | Student consciously applies tools and strategies appropriate to the task. | Student adapts tools and strategies to apply these skills in novel situations. | Student extends and adapts tools and strategies when opportunity arises. |
| | The student demonstrates some of the indicators in the target area. | The student consciously selects appropriate applications of this skill that relate to many of the indicators in the target area. | The student demonstrates a secure level of performance that relates to many of the indicators in the target area. | The student demonstrates a level of performance beyond what is expected and uses this to be very successful at the task. |

In this example, “Target” indicates the desired level of demonstration and quality as articulated in the continua for that developmental band. It represents competency.

Source: Greg Curtis, Moving Beyond Busy, Solution Tree (2019)

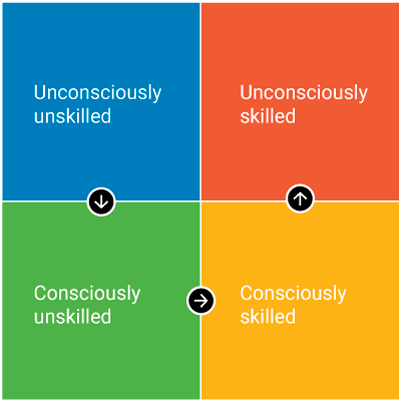

In developing the dispositions scale below, I drew on the work of Noel Burch, who created the “Four Stages of Learning Any New Skill” in the 1970s. The four stages outlined in this model are unconscious incompetence, conscious incompetence, conscious competence and unconscious competence.

The trajectory in Burch’s model, a move from unknowing to automaticity, reflects our developmental goal for dispositions.

| Not Evident | Emerging (Unconsciously Undeveloped) | Developing (Consciously Underdeveloped) | Developed (Consciously Developed) | Extending (Unconsciously Developed) |
|---|---|---|---|---|
| Elements of this disposition have not been demonstrated in the context of this task. Note: This is not a "0" but a "null": the teacher has no evidence of the disposition. This score is not included in the consolidation of performance data. | The student demonstrates a capacity for this disposition as part of his or her existing set of traits. | The student can see the supporting role of this disposition in specific contexts but cannot yet consciously apply it to the task. | The student demonstrates this disposition as part of his or her conscious efforts to be successful at this task. | The student demonstrates this disposition at a highly developed and relatively automatic level. |
| | This disposition is somewhat innate and undeveloped, and the student requires direct support. | This disposition is understood, but the student has yet to consciously apply tools and strategies. | The student applies tools and strategies to positive effect at a conscious level. | This disposition is firmly embedded as a positive personal trait, and the student transfers it relatively automatically. |
| | The student demonstrates some elements of this disposition, but often with limited capability and without a great deal of conscious purpose. | The student can identify the need for this disposition but is still learning to consciously and effectively apply approaches to act in this area and succeed at the task. | The student may need limited support or direction to consciously engage this disposition. | The student enacts this disposition as part of seeking success, often automatically and effortlessly. |

In this example, “Developed” indicates the desired level of demonstration and quality as articulated in the continua for that developmental band. We can think of it as equivalent to competency.

Source: Greg Curtis, Moving Beyond Busy, Solution Tree (2019)

Richer Evidence-Based Feedback

Instead of treating formative assessments of siloed skills, which do not move interdisciplinary mastery forward, as benchmarks for learning, we now have a framework (the level to which) that allows us to provide specific, consistent feedback (how we communicate) to students on all elements of their work (what we are looking at) that contribute to success at a rich task or demonstration of learning (what we are looking for). Below is an example of what this might look like for the “grafted” assessment I shared in a previous blog.

Source: Greg Curtis, Moving Beyond Busy, Solution Tree (2019)

While mastery learning unlocks students’ magical potential, it is not magic. My goal with this blog series is to furnish you with concrete tools for pulling back the curtain and putting mastery learning into practice in fresh ways that work for your classroom environment. In my next piece, I will explore what David Perkins calls “moving beyond content” by aligning strategies to the transdisciplinary learning goals within your Mastery Credit Architecture.

Greg Curtis’ MTC Insights series also includes:

Article 1: Transforming Assessment, Curriculum, and Beyond

Article 2: What Students Must Learn

Article 3: Transforming Assessment, Curriculum, and Beyond, May 2020

Article 4: What is Assessment in the New Normal?